The past year has seen major advances in Large Language Models (LLMs) such as ChatGPT. The ability of these models to interpret and produce human text (and other sequence data) has implications for people in many areas of human activity. A new perspective paper in the journal Neuron argues that, like many professionals, neuroscientists can either benefit from partnering with these powerful tools or risk being left behind.

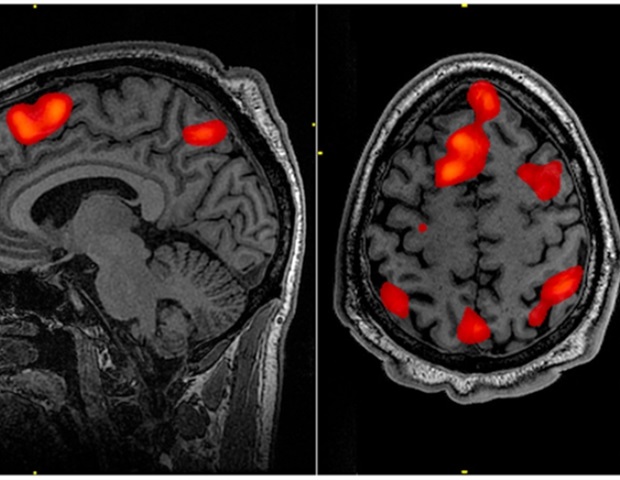

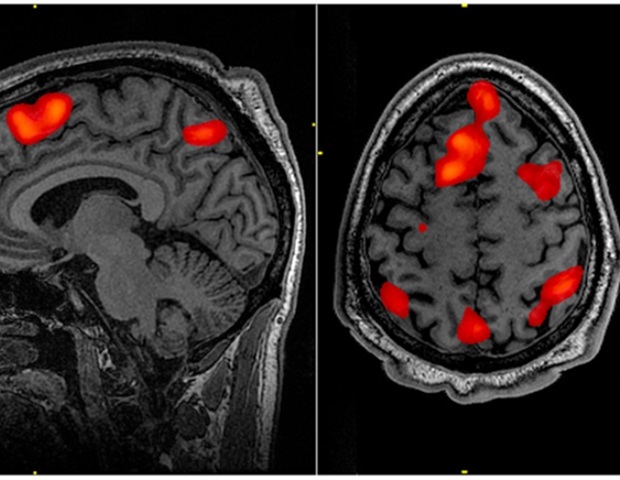

In their earlier research, the authors showed that important preconditions are met to develop LLMs that can interpret and analyze neuroscientific data the way ChatGPT interprets language. These AI models can be built for many different types of data, including neuroimaging, genetics, single-cell genomics, and even hand-written clinical reports.

In the traditional model of research, a scientist studies previous data on a topic, develops new hypotheses and tests them using experiments. Because of the massive amounts of data available, scientists often focus on a narrow field of research, such as neuroimaging or genetics. LLMs, however, can absorb more neuroscientific research than a single human ever could. In their Neuron paper, the authors argue that one day LLMs specialized in diverse areas of neuroscience could be used to communicate with one another to bridge siloed areas of neuroscience research, uncovering truths that would be impossible for humans alone to find. In the case of drug development, for example, an LLM specialized in genetics could be used along with a neuroimaging LLM to discover promising candidate molecules to stop neurodegeneration. The neuroscientist would direct these LLMs and verify their outputs.

Lead author Danilo Bzdok mentions the possibility that the scientist will, in certain cases, not always be able to fully understand the mechanism behind the biological processes discovered by these LLMs.

“We have to be open to the fact that certain things about the brain may be unknowable, or at least take a long time to understand. Yet we might still generate insights from state-of-the-art LLMs and make scientific progress, even if we don’t fully grasp the way they reach conclusions.”

Danilo Bzdok, Lead Author

To realize the full potential of LLMs in neuroscience, Bzdok says scientists would need more infrastructure for data processing and storage than is available today at many research organizations. More importantly, it would take a cultural shift to a much more data-driven scientific approach, where studies that rely heavily on artificial intelligence and LLMs are published by leading journals and funded by public agencies. While the traditional model of strongly hypothesis-driven research remains key and isn’t going away, Bzdok says capitalizing on emerging LLM technologies might be important to spur the next generation of neurological treatments in cases where the old model has been less fruitful.

“To quote John Naisbitt, neuroscientists today are ‘drowning in information but starving for knowledge,’” he says. “Our ability to generate biomolecular data is eclipsing our ability to glean understanding from these systems. LLMs offer an answer to this problem. They can extract, synergize and synthesize knowledge from and across neuroscience domains, a task that may or may not exceed human comprehension.”

Journal reference:

Bzdok, D., et al. (2024) Data science opportunities of large language models for neuroscience and biomedicine. Neuron. doi.org/10.1016/j.neuron.2024.01.016.